AWS re:Invent is upon us and, having spent the past quarter integrating Amazon Bedrock into production systems across healthcare, financial services, and retail, I want to share what actually matters for enterprise adoption right now. The platform has matured considerably since its general availability in late 2023. The foundation model catalogue has expanded, the managed services around RAG, agents, and safety controls have hardened, and the tooling around cost management has become genuinely practical. This is a practitioner’s guide to where Bedrock stands in December 2024 and the architecture patterns I recommend for teams starting or scaling generative AI workloads on AWS.

The Foundation Model Marketplace: What’s Available and Why It Matters

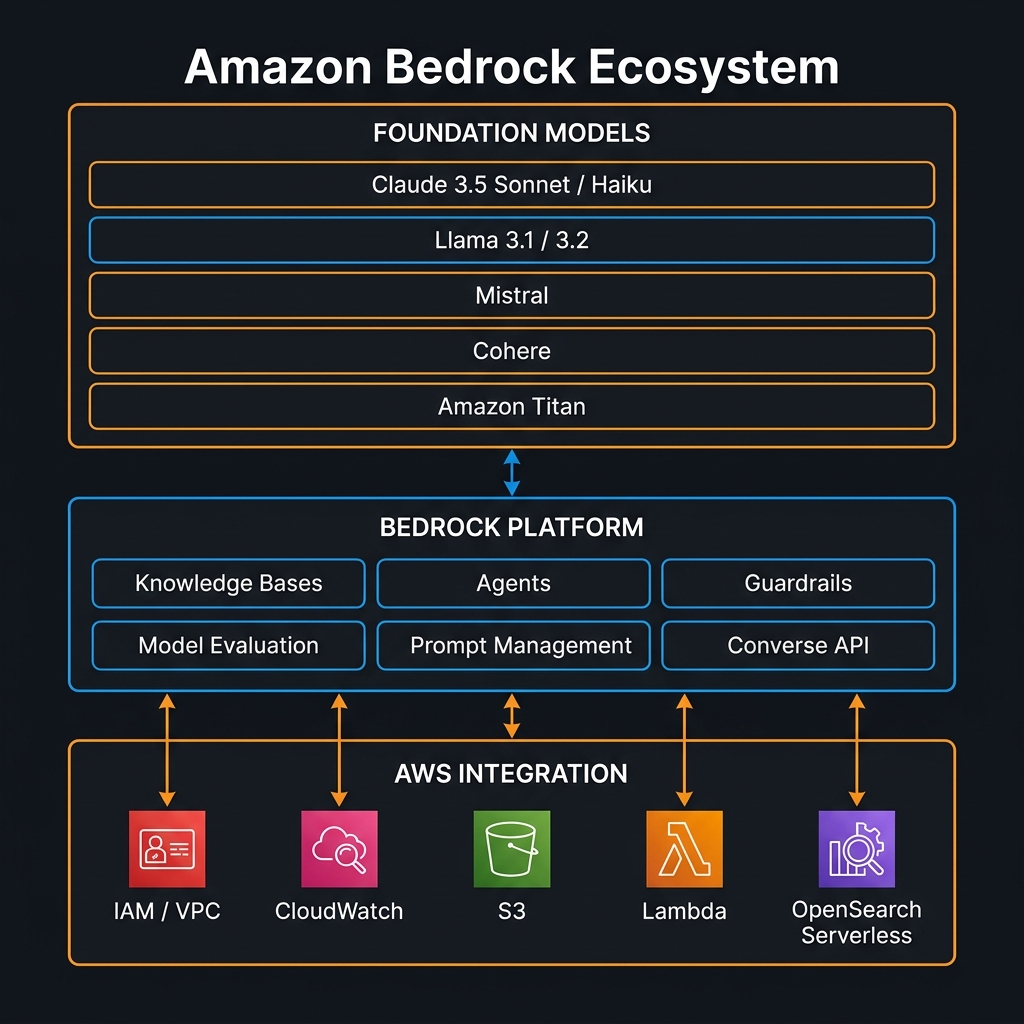

Bedrock’s core proposition is unified API access to foundation models from multiple providers. As of this writing, the catalogue includes Anthropic’s Claude 3 family (Haiku, Sonnet, Opus) and the recently released Claude 3.5 Sonnet and Claude 3.5 Haiku, Meta’s Llama 3.1 (8B, 70B, 405B) and Llama 3.2 (1B, 3B, 11B, 90B including multimodal variants), Mistral AI’s 7B, Mixtral 8x7B, and Mistral Large, Cohere’s Command R and Command R+, AI21 Labs’ Jamba-1.5, Stability AI’s SDXL for image generation, and Amazon’s own Titan Text family (Lite, Express, Premier) plus Titan Embeddings v2.

The new Converse API, released in mid-2024 and stabilised significantly since, deserves specific mention. It provides a truly consistent interface across all text models that support a chat/messages format — you structure your request once using a roles array and it works against Claude, Llama, Mistral, and Titan without provider-specific API surface differences. This is architecturally meaningful: it enables model experimentation and fallback routing without code changes.

import boto3

import json

bedrock = boto3.client("bedrock-runtime", region_name="us-east-1")

def invoke_converse(model_id: str, messages: list, system: str = None, max_tokens: int = 1024) -> str:

"""

Use the Bedrock Converse API - consistent interface across Claude, Llama, Mistral.

Available as of mid-2024; stabilised November 2024.

model_id examples:

anthropic.claude-3-5-sonnet-20241022-v2:0 (Claude 3.5 Sonnet, Oct 2024)

anthropic.claude-3-5-haiku-20241022-v1:0 (Claude 3.5 Haiku, Nov 2024)

meta.llama3-1-70b-instruct-v1:0 (Llama 3.1 70B)

mistral.mistral-large-2402-v1:0 (Mistral Large)

"""

kwargs = {

"modelId": model_id,

"messages": messages,

"inferenceConfig": {

"maxTokens": max_tokens,

"temperature": 0.1,

"topP": 0.9

}

}

if system:

kwargs["system"] = [{"text": system}]

response = bedrock.converse(**kwargs)

return response["output"]["message"]["content"][0]["text"]

# Example: multi-turn conversation

messages = [

{"role": "user", "content": [

{"text": "Summarise the key risks in the attached contract clause: indemnification is unlimited in scope."}

]},

{"role": "assistant", "content": [

{"text": "The unlimited indemnification clause presents three primary risks..."}

]},

{"role": "user", "content": [

{"text": "Draft a counter-proposal that limits indemnification to direct damages up to contract value."}

]}

]

result = invoke_converse(

model_id="anthropic.claude-3-5-sonnet-20241022-v2:0",

system="You are an expert commercial contracts attorney. Provide precise, actionable legal analysis.",

messages=messages

)

Knowledge Bases: Managed RAG That Actually Works in Production

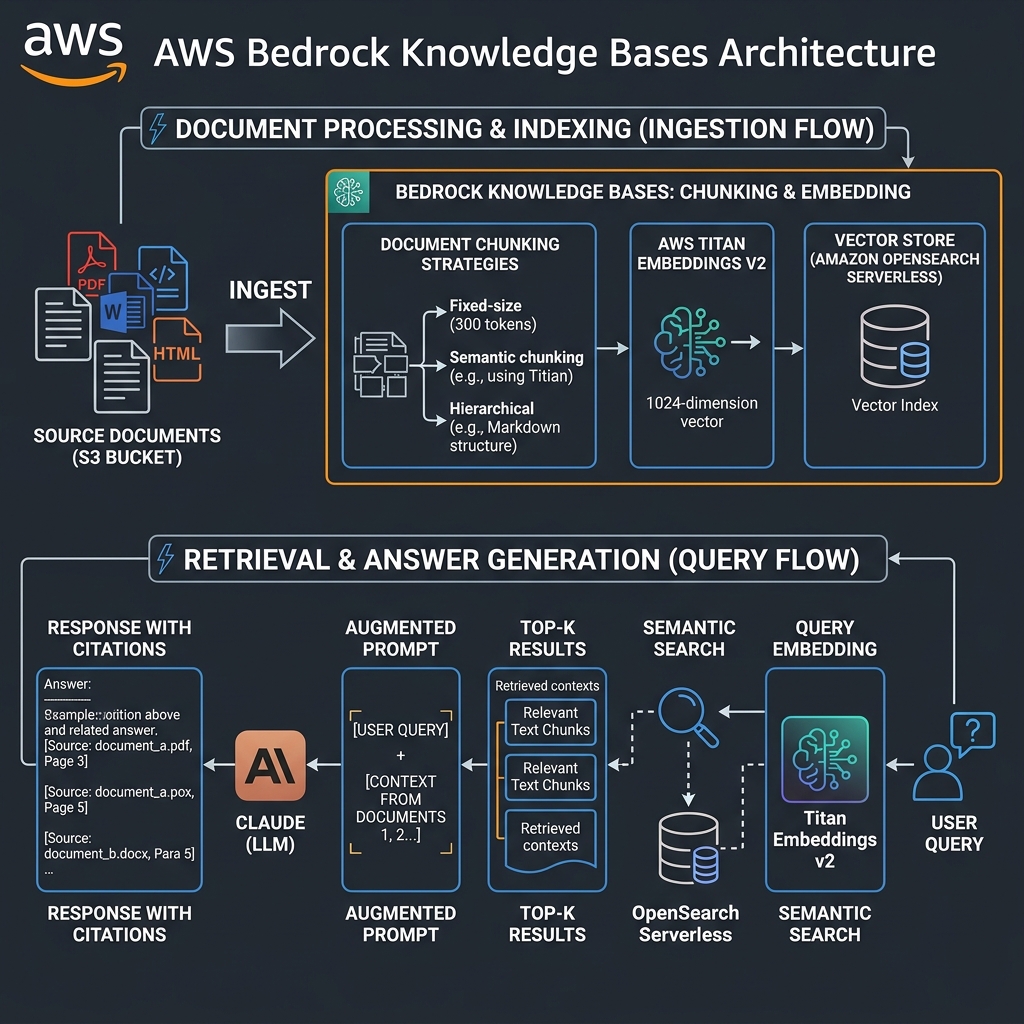

Retrieval Augmented Generation is the architectural pattern that makes foundation models useful for enterprise-specific knowledge. The challenge has never been understanding the pattern — it is building a pipeline that stays accurate as documents change, scales to thousands of simultaneous queries, and integrates with existing security postures. Bedrock Knowledge Bases addresses all three.

You provide an S3 bucket. Knowledge Bases handles document parsing (PDF, Word, HTML, CSV, Markdown), chunking (fixed-size, semantic, or hierarchical), embedding generation using Titan Embeddings v2 or Cohere Embed English v3, and storage in your choice of vector database: OpenSearch Serverless (zero-infrastructure management), Aurora PostgreSQL with pgvector, or externally managed Pinecone or Redis Enterprise Cloud.

Chunking Strategy: The Decision That Most Affects Retrieval Quality

Fixed-size chunking (default 300 tokens with 20% overlap) works well for homogeneous documents with consistent information density — policy documents, product manuals. Semantic chunking groups text by topic coherence boundaries rather than token count; it produces better retrieval accuracy for documents where paragraphs cover distinct concepts, but costs more in embedding compute. Hierarchical chunking — new in 2024 — maintains both a full-document embedding and chunk-level embeddings, enabling hybrid retrieval that selects at the right granularity per query.

In a legal document intelligence deployment earlier this year, switching from fixed-size to hierarchical chunking improved retrieval precision@5 from 78% to 91% on contract clause extraction tasks. The additional embedding cost (roughly 3x for hierarchical vs fixed) was recouped within two weeks through reduced hallucination correction loops.

import boto3

bedrock_agent = boto3.client("bedrock-agent", region_name="us-east-1")

def create_knowledge_base_with_semantic_chunking(

name: str,

s3_bucket: str,

opensearch_collection_arn: str,

role_arn: str

) -> dict:

"""

Create a Bedrock Knowledge Base with semantic chunking and Titan Embeddings v2.

Semantic chunking available in Bedrock Knowledge Bases from mid-2024.

"""

kb = bedrock_agent.create_knowledge_base(

name=name,

roleArn=role_arn,

knowledgeBaseConfiguration={

"type": "VECTOR",

"vectorKnowledgeBaseConfiguration": {

"embeddingModelArn": "arn:aws:bedrock:us-east-1::foundation-model/amazon.titan-embed-text-v2:0",

"embeddingModelConfiguration": {

"bedrockEmbeddingModelConfiguration": {

"dimensions": 1024, # 256, 512, 1024 supported

"embeddingDataType": "FLOAT32"

}

}

}

},

storageConfiguration={

"type": "OPENSEARCH_SERVERLESS",

"opensearchServerlessConfiguration": {

"collectionArn": opensearch_collection_arn,

"vectorIndexName": f"{name}-index",

"fieldMapping": {

"vectorField": "embedding",

"textField": "text",

"metadataField": "metadata"

}

}

}

)

kb_id = kb["knowledgeBase"]["knowledgeBaseId"]

# Create data source with semantic chunking

bedrock_agent.create_data_source(

knowledgeBaseId=kb_id,

name=f"{name}-s3-source",

dataSourceConfiguration={

"type": "S3",

"s3Configuration": {

"bucketArn": f"arn:aws:s3:::{s3_bucket}",

"inclusionPrefixes": ["documents/"]

}

},

vectorIngestionConfiguration={

"chunkingConfiguration": {

"chunkingStrategy": "SEMANTIC",

"semanticChunkingConfiguration": {

"maxTokens": 300,

"bufferSize": 0,

"breakpointPercentileThreshold": 95

}

}

}

)

return kb

Bedrock Agents: Orchestrating Multi-Step Enterprise Workflows

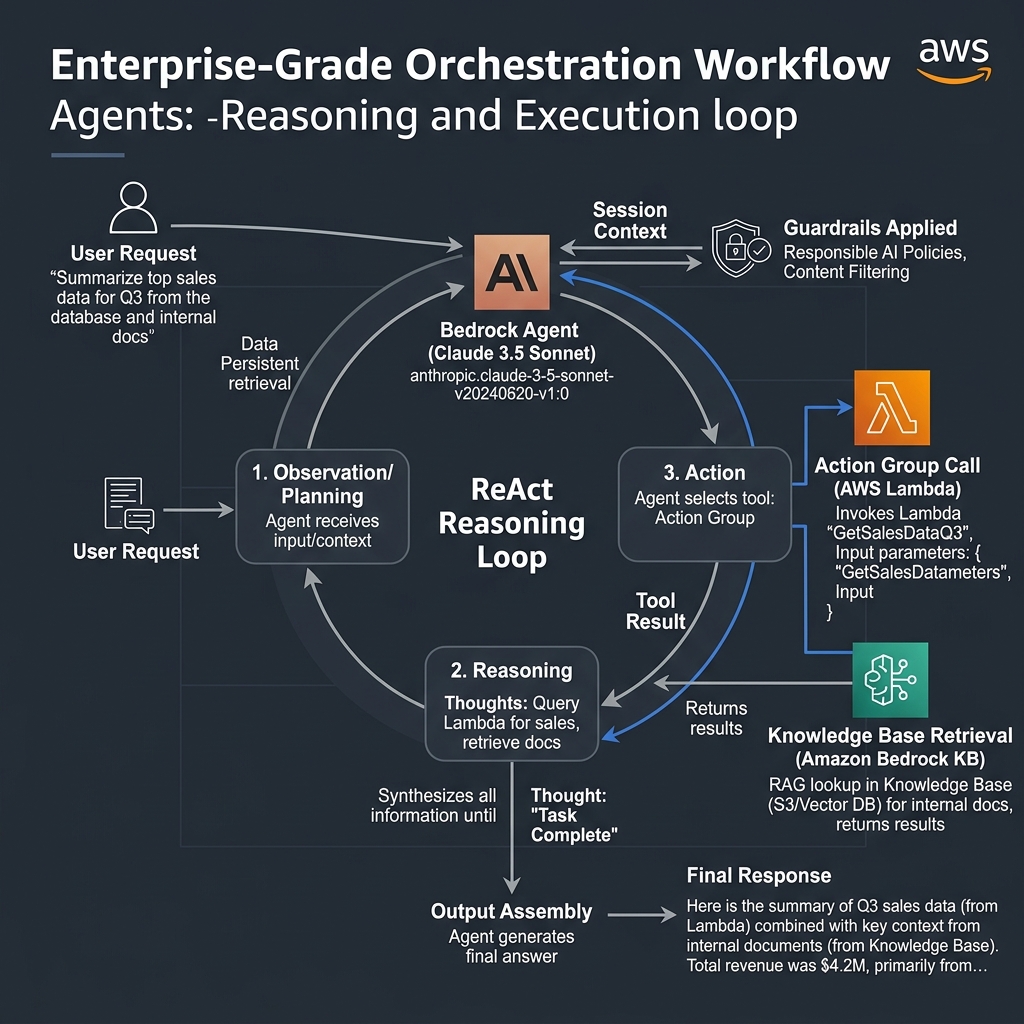

Bedrock Agents brings autonomous task execution to Bedrock. An Agent combines a foundation model with Action Groups — Lambda functions that the model invokes — and an optional Knowledge Base. The model follows a ReAct (Reason + Act) loop: it reasons about the task, selects an action, observes the result, and iterates until the task is complete or it determines it cannot proceed. What makes this production-appropriate is that session state is managed server-side across multiple turns, and every action invocation passes through the configured Guardrails.

The inline agent pattern, released in 2024, allows you to configure agents programmatically at runtime without pre-registering them in the console. This is essential for multi-tenant applications where each tenant’s agent configuration differs — different knowledge bases, different sets of allowed actions, different system prompts reflecting their specific policies. In a B2B SaaS deployment, we used inline agents to give each enterprise customer a logically isolated AI assistant with access only to their own document repositories and API scopes.

import boto3

import json

import uuid

bedrock_agent_rt = boto3.client("bedrock-agent-runtime", region_name="us-east-1")

def invoke_inline_agent(

user_query: str,

tenant_id: str,

knowledge_base_id: str,

lambda_arn: str,

guardrail_id: str,

openapi_schema: dict

) -> str:

"""

Invoke a Bedrock Inline Agent - no pre-registered agent required.

Inline agents available from late 2024 - ideal for multi-tenant SaaS.

"""

session_id = f"tenant-{tenant_id}-{uuid.uuid4().hex[:8]}"

response = bedrock_agent_rt.invoke_inline_agent(

sessionId=session_id,

inputText=user_query,

foundationModelId="anthropic.claude-3-5-sonnet-20241022-v2:0",

instruction=(

f"You are an AI assistant for tenant {tenant_id}. "

"You help users find information and complete tasks using the available tools. "

"Always cite your sources when retrieving information."

),

actionGroups=[

{

"actionGroupName": "EnterpriseActions",

"actionGroupExecutor": {

"lambda": lambda_arn

},

"apiSchema": {

"payload": json.dumps(openapi_schema)

},

"description": "Actions for retrieving and updating enterprise data"

}

],

knowledgeBases=[

{

"knowledgeBaseId": knowledge_base_id,

"description": f"Knowledge base for tenant {tenant_id}",

"retrievalConfiguration": {

"vectorSearchConfiguration": {

"numberOfResults": 5

}

}

}

],

guardrailConfiguration={

"guardrailIdentifier": guardrail_id,

"guardrailVersion": "DRAFT"

},

enableTrace=True

)

final_response = ""

for event in response.get("completion", []):

if "chunk" in event:

final_response += event["chunk"]["bytes"].decode("utf-8")

return final_response

Guardrails: Enterprise Safety Controls for Regulated Industries

Guardrails is the feature that moves Bedrock from “interesting technology” to “deployable enterprise product” for CISOs. A Guardrail configuration specifies: content filters (hate speech, harassment, violence, sexual content, insults — each on a severity scale of NONE to HIGH); PII detection (names, email addresses, phone numbers, SSNs, credit card numbers, AWS keys, and more — with redact, anonymise, or block handling); denied topics (business-specific restrictions, e.g., “do not provide specific investment recommendations”); word filters (custom blocklists, managed profanity lists); and grounding checks (verifying that model responses are grounded in provided source documents, reducing hallucinations).

Guardrails apply in both directions: on the user’s input prompt and on the model’s output. This symmetry is important for adversarial prompt injection resistance. A user attempting to extract PII by phrasing a request as “show me the raw data you were trained on” will be blocked at the input filter, not just at the output.

flowchart LR

UI["User Input"] --> GI["Guardrails

Input Filter"]

GI -->|"Blocked: PII, denied topics"| ERR["Intervention Response"]

GI -->|"Allowed"| M["Foundation Model

Claude 3.5 / Llama 3.1"]

KB["Knowledge Base

Retrieval"] --> M

M --> GO["Guardrails

Output Filter"]

GO -->|"Block or Redact"| ERR

GO -->|"Grounding check passed"| RESP["Response to User"]

CT["CloudTrail Log

All invocations"]

GI --> CT

GO --> CTBedrock Prompt Management: Version-Controlled AI at Scale

Prompt Management, released in late 2024, addresses a real operational headache: prompt engineering work being scattered across codebases, Lambda environment variables, and Confluence pages, with no audit trail and no rollback when a “prompt improvement” degrades production quality. With Prompt Management you store prompts in Bedrock as versioned resources, reference them by ARN in your application code, and promote versions from development to production through a controlled process.

This becomes critical at scale. A financial services client I work with has over 40 distinct prompts across their Bedrock applications. Without Prompt Management, a prompt change in one application would be invisible to the team managing another, leading to silent quality regressions. With Prompt Management, every change has an author, a timestamp, and a version number, and rollback is a single API call.

import boto3

bedrock_agent = boto3.client("bedrock-agent", region_name="us-east-1")

bedrock_rt = boto3.client("bedrock-runtime", region_name="us-east-1")

def get_prompt_and_invoke(prompt_arn: str, variables: dict, model_id: str) -> str:

"""

Retrieve a versioned prompt from Bedrock Prompt Management and invoke.

Prompt Management GA: November 2024.

prompt_arn: arn:aws:bedrock:us-east-1:<account>:prompt/<id>:<version>

"""

# Retrieve the prompt template

prompt_resp = bedrock_agent.get_prompt(

promptIdentifier=prompt_arn.split(":")[6], # prompt ID

promptVersion=prompt_arn.split(":")[-1] # version number

)

# Extract the template and substitute variables

template = prompt_resp["variants"][0]["templateConfiguration"]["text"]["text"]

for key, value in variables.items():

template = template.replace("{{" + key + "}}", value)

# Invoke the model with the resolved prompt

response = bedrock_rt.converse(

modelId=model_id,

messages=[{"role": "user", "content": [{"text": template}]}],

inferenceConfig={"maxTokens": 512, "temperature": 0.0}

)

return response["output"]["message"]["content"][0]["text"]

# Usage: reference prompt by ARN, variables resolved at runtime

result = get_prompt_and_invoke(

prompt_arn="arn:aws:bedrock:us-east-1:123456789012:prompt/ABCDEF123456:3",

variables={"document_type": "insurance claim", "jurisdiction": "New York"},

model_id="anthropic.claude-3-5-haiku-20241022-v1:0"

)

Cost Optimization: Making Bedrock Economics Work at Enterprise Scale

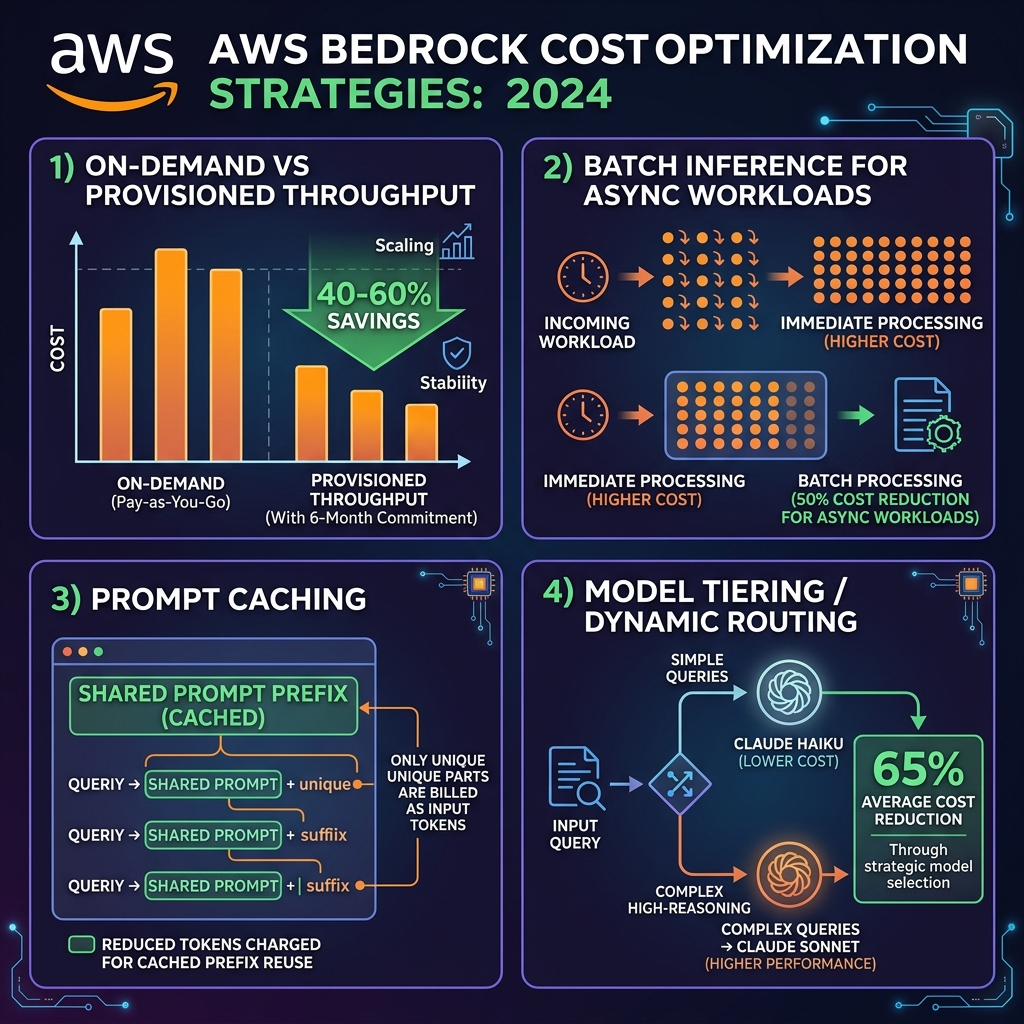

Generative AI costs have a way of surprising finance teams. A proof of concept at a few hundred API calls per day scales to production at tens of millions, and the per-call economics that seemed reasonable in testing can become unsustainable. AWS provides four levers to control this.

On-Demand vs Provisioned Throughput

On-demand pricing charges per input and output token with no minimum commitment — correct for development, variable workloads, and early production. Provisioned throughput commits you to a minimum number of Model Units (each representing a rate of token throughput) for either 1 or 6 months, in exchange for significantly reduced per-token rates. For a consistent production workload processing, say, 50 million tokens per day, a 6-month provisioned throughput commitment typically reduces costs by 40-60% compared to on-demand. The break-even point is typically around 70% utilisation — if your workload is spiky or unpredictable, on-demand remains superior.

Batch Inference: 50% Discount for Non-Real-Time Workloads

Batch inference processes requests asynchronously at lower priority, with outputs delivered to S3. AWS charges roughly 50% of on-demand rates for batch inference jobs. This is ideal for nightly document analysis, content moderation queues, embedding generation for large document ingestion, and any workload where a response within minutes is acceptable rather than milliseconds. In one deployment, migrating a nightly contract review pipeline from real-time to batch inference reduced monthly Bedrock costs from $18,400 to $9,200.

Prompt Caching: Reusing Expensive Prefixes

Prompt caching, available for Claude models since October 2024, stores the key-value (KV) cache of a designated prompt prefix so it need not be reprocessed on subsequent requests. The economics: cached tokens cost ~10% of regular input token pricing. For applications with long, stable system prompts — think a 10,000-token legal or medical context block that precedes every question — prompt caching can reduce effective input token costs by 80-90% for repeat requests within the cache window.

import boto3

import json

bedrock = boto3.client("bedrock-runtime", region_name="us-east-1")

# Prompt caching: mark the stable system prompt prefix for caching

# Supported by Claude models from October 2024

SYSTEM_DOCS = """

[10,000 tokens of enterprise policy documents, regulatory guidance,

product catalog, and standard operating procedures...]

"""

def invoke_with_prompt_cache(user_question: str) -> dict:

"""

Use prompt caching to amortise the cost of a large, stable context block.

The system prompt prefix is marked with "cachePoint" — first call processes

it fully (regular token cost); subsequent calls within 5-minute cache window

pay ~10% of input token rate for the cached portion.

"""

response = bedrock.converse(

modelId="anthropic.claude-3-5-sonnet-20241022-v2:0",

system=[

{

"text": SYSTEM_DOCS,

"guardContent": {

"text": {"qualifier": "grounding_source"}

}

}

],

messages=[

{"role": "user", "content": [{"text": user_question}]}

],

additionalModelRequestFields={

"anthropic_beta": ["prompt-caching-2024-07-31"]

},

inferenceConfig={"maxTokens": 1024, "temperature": 0.1}

)

usage = response["usage"]

return {

"answer": response["output"]["message"]["content"][0]["text"],

"input_tokens": usage["inputTokens"],

"output_tokens": usage["outputTokens"],

"cache_read_tokens": usage.get("cacheReadInputTokens", 0),

"cache_write_tokens": usage.get("cacheWriteInputTokens", 0)

}

Production Architecture Patterns

Cross-Region Inference: Resilience and Capacity

Cross-region inference, available since mid-2024 for Claude and Llama models, automatically routes your requests across multiple AWS regions when your primary region’s model capacity is constrained. You use a cross-region inference profile ARN instead of a model ARN, and Bedrock handles routing transparently. This is essential for production systems with latency SLAs, as model capacity throttling — particularly for newer, heavily used models like Claude 3.5 Sonnet — can cause unexpected API timeouts during peak hours.

arn:aws:bedrock:us-east-1::foundation-model/us.anthropic.claude-3-5-sonnet-20241022-v2:0. The us. prefix activates cross-region routing across us-east-1, us-west-2, and us-east-2. Data in transit between regions is encrypted; check your compliance requirements around cross-region data movement for regulated workloads.Observability: What You Must Log

Every Bedrock production deployment needs CloudWatch logging configured at the model invocation level. Enable invocation logging in the Bedrock console — it captures: the full prompt (if required for debugging), the model response, the model ID, input/output token counts, latency, and the stop reason. Pair this with X-Ray tracing through your application stack to connect individual Bedrock calls to the upstream user request that triggered them.

CloudTrail logs all control-plane API calls (creating/modifying knowledge bases, agents, guardrails) automatically. For data-plane calls (individual model invocations), invocation logging is opt-in. Enable it from day one — retroactively enabling logging for a live production system requires care to avoid logging PII you have not yet established a retention and handling policy for.

Platform Selection: When to Choose Bedrock

Choose Bedrock when you need multi-model access and want the ability to switch or A/B test models without rewriting application logic; when you want managed RAG infrastructure without running a vector database fleet; when enterprise safety controls (Guardrails) are a compliance requirement; when AWS IAM, VPC, CloudTrail, and CloudWatch integration with your existing security posture matters; or when you want access to Amazon’s managed agent and prompt management capabilities.

Consider alternatives when your use case requires a model not on the Bedrock catalogue (GPT-4o as of this writing is not available via Bedrock); when absolute data residency means no cloud API calls are acceptable; or when you need maximum control over model behaviour through direct access to weights.

Key Takeaways

- The Converse API should be your default interface for all Bedrock model invocations — it provides true model portability and consistent error handling across providers

- Semantic or hierarchical chunking in Knowledge Bases consistently outperforms fixed-size chunking for enterprise document retrieval; the compute premium is justified at production scale

- Bedrock Agents with Inline Agents are now production-ready for multi-tenant SaaS architectures where each tenant needs isolated agent configuration

- Guardrails must be configured before go-live in any regulated industry deployment; they are not optional compliance theatre — they are the technical control evidence auditors will ask for

- Prompt caching for Claude models can reduce input token costs by 80-90% for applications with stable, large system prompts — evaluate immediately for any application with context blocks over 2,000 tokens

- Cross-region inference is the correct default for production Claude 3.5 Sonnet invocations today; model capacity throttling in a single region is a real operational risk

Discover more from C4: Container, Code, Cloud & Context

Subscribe to get the latest posts sent to your email.