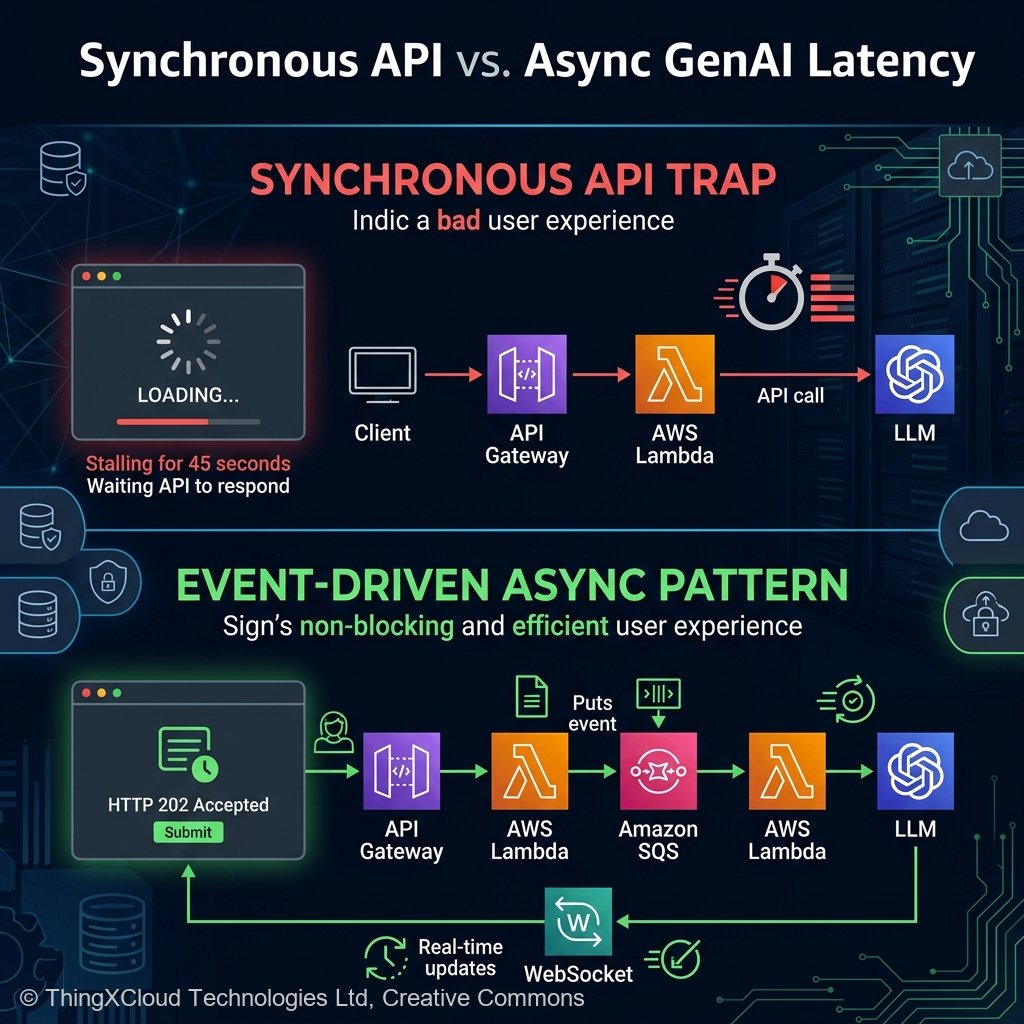

In the rush to integrate Generative AI throughout 2024 and 2025, enterprise engineering teams consistently fell into the same architectural trap: treating Large Language Models like standard REST APIs. Developers would wire a React frontend directly to an API Gateway, pointing to an AWS Lambda function that executed a synchronous InvokeModel call to Amazon Bedrock.

For a simple 10-token prompt, that pattern holds. But when a user asks the LLM to process a dense 400-page PDF with Claude 3.5 Sonnet to generate an intricate quarterly financial report, inference latency can easily stretch into the 30-45 second range. API Gateway hits its default 29-second integration timeout and returns a 504 Gateway Timeout to the React client, the Lambda function either keeps running with nobody listening or is killed at its own configured timeout, and the end user stares at a permanently hung loading spinner.

By early 2026, much of the industry has converged on the Event-Driven GenAI Pattern. We no longer force frontends to await LLMs synchronously. Instead, we lean on Amazon EventBridge, DynamoDB Streams, and AWS Step Functions to decouple the generation work from the immediate user interface. This article breaks down how to architect robust, asynchronous foundation model operations on AWS.

The Synchronous Trap vs The Asynchronous Solution

The core mandate of modern AI UI development is returning an HTTP 202 Accepted instantly. When a user submits a massive data-generation request, the frontend API should not execute the generation logic. It should simply write the raw parameters of the request (User ID, Prompt Context, Status='PENDING') into a durable data store like Amazon DynamoDB, and immediately return a Job ID to the client.
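The submit path described above can be sketched as a small Lambda-style handler. This is a minimal illustration rather than a reference implementation: the request fields `userId` and `prompt`, the `CreatedAt` attribute, and the injected `put_item` callable are assumptions made for the example, while the table and attribute names follow the state machine shown later in this article.

```python
import json
import time
import uuid


def submit_job(event, put_item, table_name="LLM_Jobs"):
    """Persist the request as a PENDING job and answer 202 without calling the model."""
    body = json.loads(event.get("body") or "{}")
    job_id = str(uuid.uuid4())
    item = {
        "JobId": {"S": job_id},
        "UserId": {"S": body.get("userId", "anonymous")},  # hypothetical request field
        "UserPrompt": {"S": body.get("prompt", "")},       # hypothetical request field
        "JobStatus": {"S": "PENDING"},
        "CreatedAt": {"N": str(int(time.time()))},
    }
    # put_item is injected so the sketch runs without AWS credentials;
    # in a deployed function it could be boto3.client("dynamodb").put_item.
    put_item(TableName=table_name, Item=item)
    return {
        "statusCode": 202,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"jobId": job_id, "status": "PENDING"}),
    }
```

The handler never touches Bedrock, so its latency is bounded by a single DynamoDB write regardless of how long the generation eventually takes.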

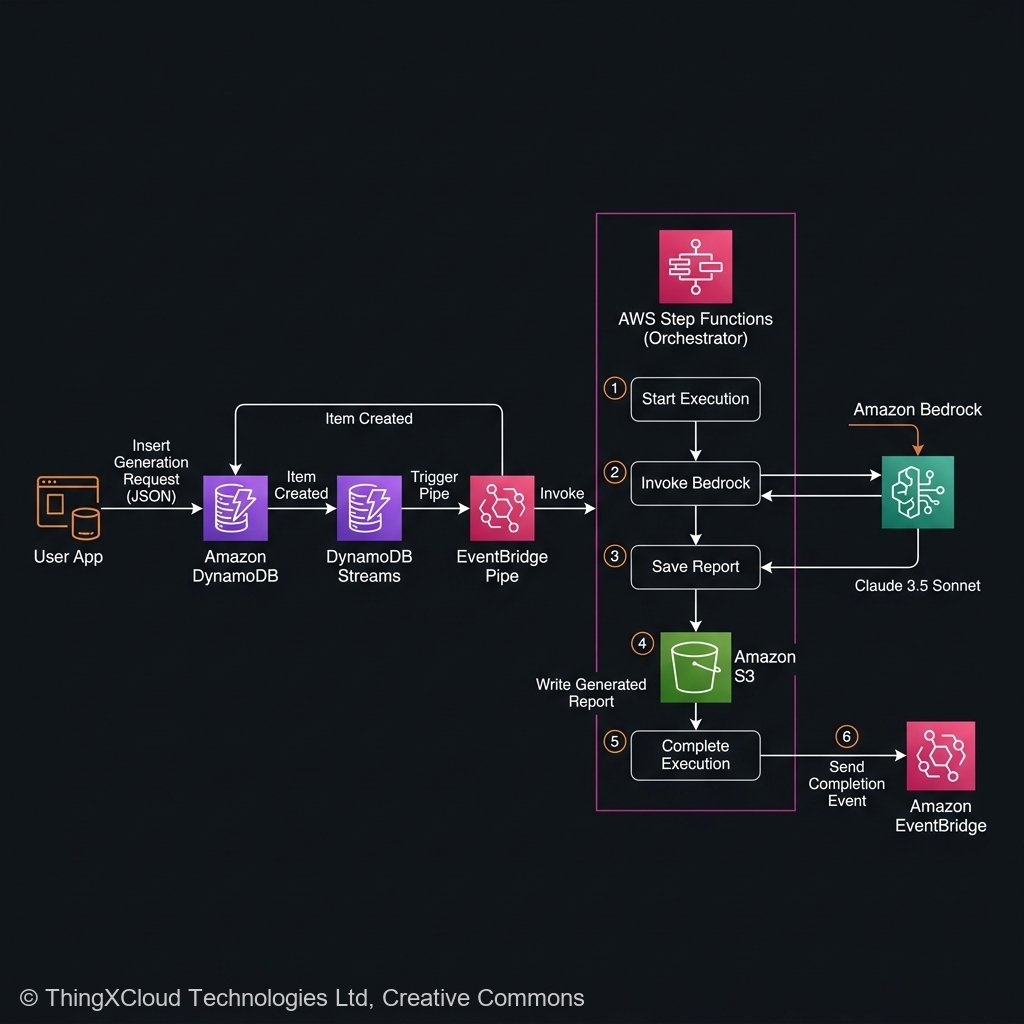

The Orchestrator: EventBridge Pipes and Step Functions

Once the job is durable, the AWS event-driven ecosystem takes over. A DynamoDB Stream captures the INSERT event, and Amazon EventBridge Pipes, which polls the stream on your behalf, starts an AWS Step Functions execution with the Job ID as input.
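To make the hand-off concrete, the sketch below mirrors in plain Python what the Pipe is expected to do: keep only INSERT records for pending jobs and reshape them into the workflow input. In a real Pipe this would normally be declared as a filter pattern and an input transformer rather than written as code; the function name and the `JobStatus` check are assumptions for illustration, and the stream is assumed to include new item images.

```python
def to_workflow_input(record):
    """Return the Step Functions input for an INSERT stream record, else None."""
    if record.get("eventName") != "INSERT":
        # MODIFY and REMOVE records (including the workflow's own status
        # update on the same table) must not start another generation.
        return None
    # NewImage is only present when the stream view type includes new images.
    image = record["dynamodb"]["NewImage"]
    if image.get("JobStatus", {}).get("S") != "PENDING":
        return None
    return {"JobId": image["JobId"]["S"], "UserPrompt": image["UserPrompt"]["S"]}
```

Filtering on INSERT matters here because the workflow later writes its result back to the same table, which produces a MODIFY record on the same stream.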

Why Step Functions instead of a raw Lambda? Because LLM requests can be flaky. If Amazon Bedrock returns a temporary `ThrottlingException` because of regional load, Step Functions handles retries with exponential backoff declaratively, without a Lambda function sitting idle (and billing) while it waits between attempts and without dropping the user payload.

Implementing the Asynchronous Pattern

In this pipeline, the Bedrock invocation is synchronous *only* from the perspective of the Step Functions task; the user remains completely decoupled.

```mermaid
flowchart TD
    UI["Frontend UI"] -->|"1. POST Request"| API["API Gateway"]
    API -->|"2. Write Job (Status: PENDING)"| DDB[("DynamoDB")]
    API -.->|"3. Return HTTP 202 (Job ID)"| UI
    DDB -->|"4. DynamoDB Stream"| EB["EventBridge Pipe"]
    EB -->|"5. Trigger Workflow"| SF{"AWS Step Functions"}
    SF -->|"6. InvokeModel (Request-Response)"| BD["Amazon Bedrock (Claude)"]
    BD -->|"7. Return Text"| SF
    SF -->|"8. Update Job (Status: COMPLETED)"| DDB
    SF -->|"9. Emit Completion Event"| EB_OUT["EventBridge Bus"]
    EB_OUT --> WSS["IoT Core / API Gateway WebSockets"]
    WSS -.->|"10. Push Notification"| UI
```

Step 1: The Step Function State Machine

AWS Step Functions offers an optimized service integration for Amazon Bedrock, so you don't need to write an AWS Lambda function just to trigger the LLM. You configure the state machine directly using Amazon States Language (ASL):

```json
{
  "StartAt": "InvokeBedrock",
  "States": {
    "InvokeBedrock": {
      "Type": "Task",
      "Resource": "arn:aws:states:::bedrock:invokeModel",
      "Parameters": {
        "ModelId": "anthropic.claude-3-5-sonnet-20241022-v2:0",
        "Body": {
          "anthropic_version": "bedrock-2023-05-31",
          "messages": [
            {
              "role": "user",
              "content": [
                {
                  "type": "text",
                  "text.$": "$.UserPrompt"
                }
              ]
            }
          ],
          "max_tokens": 4096
        }
      },
      "Retry": [
        {
          "ErrorEquals": [
            "Bedrock.ThrottlingException",
            "Bedrock.InternalServerException"
          ],
          "IntervalSeconds": 3,
          "MaxAttempts": 4,
          "BackoffRate": 2.0
        }
      ],
      "ResultPath": "$.BedrockResult",
      "Next": "UpdateDynamoDBData"
    },
    "UpdateDynamoDBData": {
      "Type": "Task",
      "Resource": "arn:aws:states:::dynamodb:updateItem",
      "Parameters": {
        "TableName": "LLM_Jobs",
        "Key": {
          "JobId": {"S.$": "$.JobId"}
        },
        "UpdateExpression": "SET JobStatus = :status, ResultData = :result",
        "ExpressionAttributeValues": {
          ":status": {"S": "COMPLETED"},
          ":result": {"S.$": "$.BedrockResult.Body.content[0].text"}
        }
      },
      "End": true
    }
  }
}
```
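To see what the `Retry` block above means in wall-clock terms, the short calculation below works out the wait before each retry. It assumes the standard ASL semantics in which `MaxAttempts` counts retries (not the first call) and each wait is the previous one multiplied by `BackoffRate`, with no jitter or maximum delay configured; the helper name is hypothetical.

```python
def retry_delays(interval_seconds, max_attempts, backoff_rate):
    """Seconds waited before each retry under plain exponential backoff."""
    return [interval_seconds * backoff_rate ** attempt for attempt in range(max_attempts)]


delays = retry_delays(interval_seconds=3, max_attempts=4, backoff_rate=2.0)
print(delays)       # [3.0, 6.0, 12.0, 24.0]
print(sum(delays))  # 45.0
```

With these settings a throttled job makes up to five calls in total and spends at most 45 seconds waiting between them, none of which the user's HTTP request has to sit through.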

Step 2: Pushing Notifications to the Frontend

While the Step Function is executing, the React UI holds the Job ID and visually indicates that work is processing (e.g., using skeleton loaders or a generating status).

There are two primary ways to retrieve the generated response once the job updates to `COMPLETED`:

- Client Polling (Acceptable, Not Ideal): The React component sets an interval and queries the API every 3 seconds (`GET /job/{id}`) until it returns the finalized dataset. This is easy to build but generates massive unnecessary HTTP traffic.

- WebSocket Push (The 2026 Enterprise Standard): When the Step Function updates the final record in DynamoDB, publish a routing event to an EventBridge Custom Bus. A small Lambda listener catches that event and pushes the exact JSON payload down to the specific user via an Amazon API Gateway WebSocket connection or AWS IoT Core MQTT subscription.
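The WebSocket option can be sketched as the small listener mentioned above. This is an illustration under assumptions, not a prescribed implementation: the event detail fields (`userId`, `jobId`, `status`) and the `lookup_connection` helper are hypothetical, and `post_to_connection` stands in for the method of the same name on the boto3 `apigatewaymanagementapi` client.

```python
import json


def push_completion(event, lookup_connection, post_to_connection):
    """Forward a job-completion event to the user's open WebSocket connection."""
    detail = event["detail"]  # EventBridge delivers the custom payload under "detail"
    connection_id = lookup_connection(detail["userId"])
    if connection_id is None:
        # User is offline: nothing to push, the job record remains the source of truth.
        return False
    message = {"jobId": detail["jobId"], "status": detail["status"]}
    post_to_connection(
        ConnectionId=connection_id,
        Data=json.dumps(message).encode("utf-8"),
    )
    return True
```

The sketch pushes only the job ID and status so the message stays small; the client can then fetch the full result through `GET /job/{id}`. A production listener would also need to handle connections that have gone stale.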

Observability in an Asynchronous World

The obvious downside to asynchronous event-driven architectures is that debugging becomes considerably harder. When an HTTP 500 error occurs in a synchronous API, you have a single request log to inspect. In Event-Driven GenAI, the failure point might exist in the API layer, the DynamoDB Stream trigger, the EventBridge routing rule, the Step Function execution, or the Bedrock API.

Because of this, end-to-end trace propagation with AWS X-Ray is essential. When the initial API request lands, make sure the API layer writes the `X-Amzn-Trace-Id` value into the initiating DynamoDB item. You then carry that same trace ID through the Step Functions execution input so that every hop can be correlated.
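One way to capture the trace ID at the API layer is sketched below. It assumes a proxy-style event whose `headers` map carries `X-Amzn-Trace-Id` in the usual `Root=...;Parent=...;Sampled=...` form; the function names and the `TraceId` attribute are hypothetical.

```python
def parse_trace_header(header):
    """Split a value such as 'Root=1-abc;Parent=def;Sampled=1' into a dict."""
    fields = {}
    for part in header.split(";"):
        key, sep, value = part.strip().partition("=")
        if sep:  # ignore empty or malformed segments
            fields[key] = value
    return fields


def trace_attribute(event):
    """Build a DynamoDB attribute holding the trace root, or {} if none was sent."""
    headers = {k.lower(): v for k, v in (event.get("headers") or {}).items()}
    root = parse_trace_header(headers.get("x-amzn-trace-id", "")).get("Root")
    return {"TraceId": {"S": root}} if root else {}
```

Merging the returned attribute into the job item at write time means the stream record, the workflow input, and any log line that includes the job can all be tied back to the original request.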

Key Takeaways

- Synchronous AI is dead. Never tie a user interface HTTP request explicitly to an LLM invocation. Always write the intent to a durable queue or NoSQL table and execute asynchronously.

- Leverage the Native State Machine. Replace bridging Lambda functions with the AWS Step Functions optimized integration for Amazon Bedrock, and use ASL-level retry configuration to absorb Bedrock throttling.

- WebSockets complete the circle. To maintain a hyper-responsive user experience, push completion states down from EventBridge Custom Buses over API Gateway WebSockets rather than relying on endless frontend client polling.

- Enforce Idempotency. The distributed, asynchronous architecture guarantees high availability but introduces the risk of duplicated events. Shield your AWS bill by having the workflow check the job's current status before it spends tokens on an inference call.

- Decouple Payload Passing. Do not attempt to route heavy foundation model responses directly through EventBridge JSON events (256KB constraint); store large outputs in S3 and pass references back to clients.
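The last two takeaways can be sketched in a few lines each. Both functions are illustrations under assumptions: the intermediate `IN_PROGRESS` status, the `results/` key prefix, and the 200,000-byte inline threshold (chosen to leave headroom under the 256KB limit) are not from the architecture above, and the AWS calls are injected so the logic runs locally. With boto3, `update_item` and `conditional_failure` could be `client.update_item` and `client.exceptions.ConditionalCheckFailedException` from a DynamoDB client, and `put_object` the S3 client method of that name.

```python
def claim_job(update_item, conditional_failure, job_id, table_name="LLM_Jobs"):
    """Atomically move a job from PENDING to IN_PROGRESS; False means a duplicate event."""
    try:
        update_item(
            TableName=table_name,
            Key={"JobId": {"S": job_id}},
            UpdateExpression="SET JobStatus = :next",
            ConditionExpression="JobStatus = :expected",
            ExpressionAttributeValues={
                ":next": {"S": "IN_PROGRESS"},   # hypothetical intermediate status
                ":expected": {"S": "PENDING"},
            },
        )
        return True
    except conditional_failure:
        # Another delivery of the same event already claimed this job.
        return False


def result_reference(text, put_object, bucket, job_id, inline_limit_bytes=200_000):
    """Return the text inline when small, otherwise store it in S3 and return a pointer."""
    data = text.encode("utf-8")
    if len(data) <= inline_limit_bytes:
        return {"inline": text}
    key = f"results/{job_id}.txt"  # hypothetical key layout
    put_object(Bucket=bucket, Key=key, Body=data)
    return {"s3Bucket": bucket, "s3Key": key}
```

Because the status check and the status change happen in one conditional write, two deliveries of the same event cannot both pass the check. The same condition could equally be expressed on a `dynamodb:updateItem` task inside the state machine.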

Glossary

- Asynchronous Event-Driven Architecture: A distributed system design where software components execute independently, communicating primarily through published events or queues rather than awaiting immediate synchronous responses.

- Amazon EventBridge Pipes: A serverless integration resource that connects event sources (like a DynamoDB Stream) directly to downstream targets (like an AWS Step Functions state machine), removing the need for intermediate polling logic.

- Step Functions Optimized Integration: The native capability for AWS Step Functions to call supported AWS services (such as `bedrock:invokeModel`) directly, without a custom Lambda function acting as a go-between.

- Idempotency: A system quality ensuring that an operation can be applied multiple times without changing the result beyond the initial application, critical for protecting systems from duplicated event invocations.

- WebSocket API: A persistent, bidirectional connection between client and server. Amazon API Gateway hosts WebSocket APIs natively, allowing server-side resources to push data to a web client as soon as it is available.

